Original: Simon Willison · 12/02/2026

Summary

OpenAI announced a partnership with Cerebras on January 14th. Four weeks later they’re already launching the first integration, “an ultra-fast model for real-time coding in Codex”.

Key Insights

“an ultra-fast model for real-time coding in Codex” — Describing the main feature of GPT-5.3-Codex-Spark.

“at launch, Codex-Spark has a 128k context window and is text-only.” — Clarifying the initial capabilities of Codex-Spark.

“When a model responds this fast you can stay in flow state and iterate with the model much more productively.” — Highlighting the benefits of the model’s speed for coding productivity.

Topics

Full Article

Published: 2026-02-12

Source: https://simonwillison.net/2026/Feb/12/codex-spark/#atom-everything

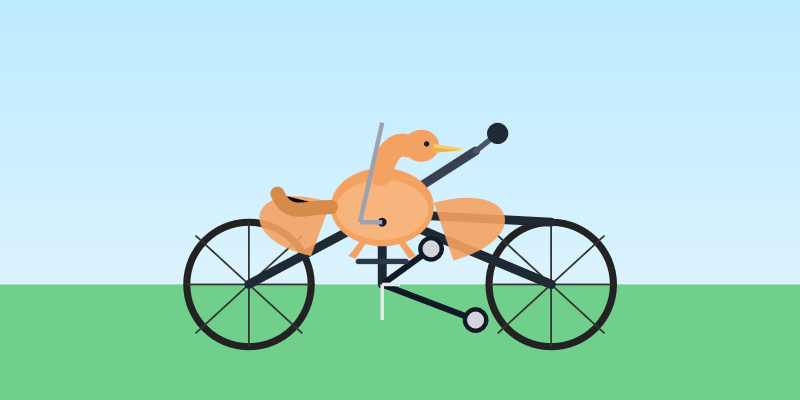

Introducing GPT‑5.3‑Codex‑Spark. OpenAI announced a partnership with Cerebras on January 14th. Four weeks later they’re already launching the first integration, “an ultra-fast model for real-time coding in Codex”.

Despite being named GPT-5.3-Codex-Spark it’s not purely an accelerated alternative to GPT-5.3-Codex - the blog post calls it “a smaller version of GPT‑5.3-Codex” and clarifies that “at launch, Codex-Spark has a 128k context window and is text-only.”

I had some preview access to this model and I can confirm that it’s significantly faster than their other models. Here’s what that speed looks like running in Codex CLI:

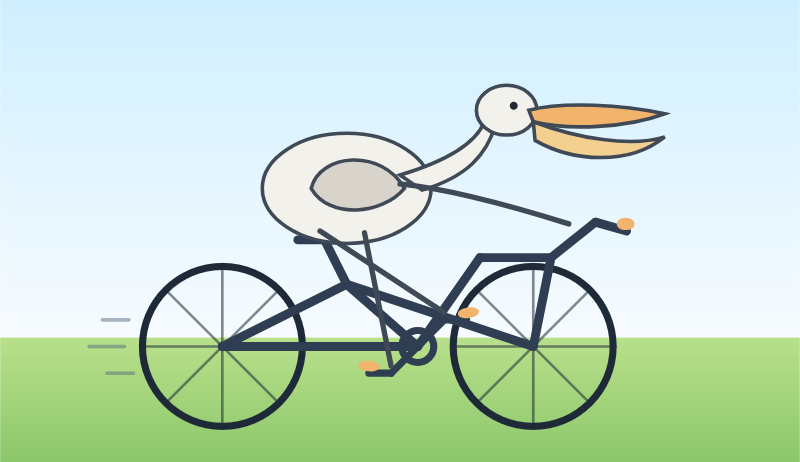

Compare that to the speed of regular GPT-5.3 Codex medium:

What’s interesting about this model isn’t the quality though, it’s the speed. When a model responds this fast you can stay in flow state and iterate with the model much more productively.

I showed a demo of Cerebras running Llama 3.1 70B at 2,000 tokens/second against Val Town back in October 2024. OpenAI claim 1,000 tokens/second for their new model, and I expect it will prove to be a ferociously useful partner for hands-on iterative coding sessions.
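To put those throughput figures in concrete terms, here is a minimal sketch that converts tokens/second into wall-clock time for one response. The two rates are the ones quoted above; the 1,500-token response size is a hypothetical value picked only for illustration, and the calculation assumes a steady decode rate while ignoring time to first token and network overhead.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to stream output_tokens at a steady decode rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# Throughput figures quoted in the text.
RATES = {
    "Codex-Spark (OpenAI's claim)": 1_000,
    "Llama 3.1 70B on Cerebras (October 2024 demo)": 2_000,
}

# Hypothetical response size, chosen only for illustration.
RESPONSE_TOKENS = 1_500

if __name__ == "__main__":
    for name, rate in RATES.items():
        seconds = generation_seconds(RESPONSE_TOKENS, rate)
        print(f"{name}: {seconds:.2f}s for {RESPONSE_TOKENS} tokens")
```

At these rates a response of that size arrives in roughly a second or less, which is the range where waiting stops interrupting an iterative coding session.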

It’s not yet clear what the pricing will look like for this new model.

Key Takeaways

Notable Quotes

“an ultra-fast model for real-time coding in Codex”
Context: Describing the main feature of GPT-5.3-Codex-Spark.

“at launch, Codex-Spark has a 128k context window and is text-only.”
Context: Clarifying the initial capabilities of Codex-Spark.

“When a model responds this fast you can stay in flow state and iterate with the model much more productively.”
Context: Highlighting the benefits of the model’s speed for coding productivity.

Related Topics

- [[topics/openai-api]]

- [[topics/prompt-engineering]]

- [[topics/ai-agents]]

Related Articles

Introducing the Codex app

Simon Willison · explanation · 77% similar

OpenAI Gave Us a Glimpse Into Their AI Coding Playbook

Dan Shipper (Every) · explanation · 71% similar

[AINews] GPT 5.4: SOTA Knowledge Work -and- Coding -and- CUA Model, OpenAI is so very back

Swyx · explanation · 70% similar

Originally published at https://simonwillison.net/2026/Feb/12/codex-spark/#atom-everything.